The Fediverse is a great system for preventing bad actors from disrupting “real” human-human conversations, because the mods, developers and admins are all working out of a desire to connect people (as opposed to “trust and safety” teams more concerned about user retention).

Right now it seems that the Fediverse’s main protection is that it just isn’t a juicy enough target for wide-scale spam and bad-faith agenda pushers.

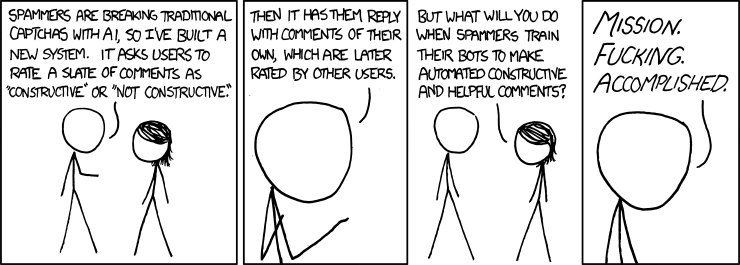

But assuming the Fediverse does grow to a significant scale, what (current or future) mechanisms are/could be in place to fend off a flood of AI slop that is hard to distinguish from human posts? Even the most committed instance admins can only do so much.

For example, I have a feeling all “good” instances in the near future will eventually have to turn on registration applications and only federate with other instances that do the same. But it’s not crazy to imagine that GPT could soon outmaneuver most registration questions, which means registration applications will only slow the growth of the problem, not manage it long-term.

Any thoughts on this topic?

What’s the incentive to operate an LLM on the fediverse that is truly helpful and not just trying to secretly sell something/push an agenda?

Well, I am not saying that the scenario is a perfect match, just that it reminded me of that:-).

Though to answer your question, if Reddit were all AI slop whereas we were not, then they would be foolish to not exploit (for moar profitz) the source of legitimately true info that could be useful to answer people’s questions, e.g. on topics such as whether and how to use Arch Linux btw. :-P

To train it to mimic genuine human behaviour for applications elsewhere.

There are two groups here, bots, and bad actors. We’ve found that these measures have mostly stopped them both.

Bots

- Registration applications. It’s been extremely easy to differentiate bots from real people by asking a series of simple questions and only letting the real people in.

- Reports: so that mods / admins can see them quickly.

- Blocking open-signup servers that don’t require applications, since these are usually used for spam attacks against the whole fediverse.

Some bots still get through occasionally, but not many compared to before. And some servers have more “lax” application questions, so they let more through.

Bad actors

- Registration applications. Most of the trolls are of a temperament where they refuse to do the work of answering questions earnestly. They can’t help themselves but give obviously trolling answers, if they even bother to do that work at all.

- Reports: same as above.

- Ban + remove. Mods and admins can ban a person and remove all of their content at the click of a button. So even if the troll did the work of getting past the front door, all of that work is nullified by an action that takes less than 5 seconds. They wasted much more of their own time than the admins’, and accomplished nothing lasting.

Great response, thank you. My concern is more so focused on future measures; what happens if/when registration applications are answerable by a bot? It’s not hard to imagine. What happens when a GPT powered bot leaves totally “normal” unique comments 90% of the time, but occasionally recommends a product or pushes a political agenda?

All I can say is that in practice, bots can’t answer most simple questions in a believable way, especially questions that require actual personal opinions, or that require any context outside of what they were asked.

The most we’ve seen is that people created seemingly lemmy-specific signup bots, but they always answer questions in the same transparent way.

The blogspam bots that have gotten through (not for many months now here on lemmy.ml) are all transparent, because they all post links to the same domain. All it takes is one report, and we can remove their entire history.
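As a rough illustration of why same-domain blogspam is easy to spot, here is a minimal Python sketch. It is not how Lemmy or lemmy.ml actually does it; the function name `suspicious_domains`, the `(author, url)` input shape, and the `min_accounts` threshold are all assumptions made up for this example.

```python
from collections import defaultdict
from urllib.parse import urlparse


def suspicious_domains(posts, min_accounts=3):
    """Group link posts by domain and return the domains that were
    posted by at least `min_accounts` distinct accounts.

    `posts` is an iterable of (author, url) pairs (hypothetical shape).
    """
    authors_by_domain = defaultdict(set)
    for author, url in posts:
        host = urlparse(url).hostname  # lowercased host, or None
        if not host:
            continue
        if host.startswith("www."):
            host = host[4:]  # treat www.example and example as the same site
        authors_by_domain[host].add(author)
    return {
        domain: sorted(authors)
        for domain, authors in authors_by_domain.items()
        if len(authors) >= min_accounts
    }


if __name__ == "__main__":
    sample = [
        ("acct_a", "https://spam.example/best-deals"),
        ("acct_b", "http://www.spam.example/top-10"),
        ("acct_c", "https://spam.example/review"),
        ("acct_d", "https://news.example/story"),
    ]
    print(suspicious_domains(sample))
```

A list like this would only be a starting point for a human mod to review, since a popular legitimate site can also be linked by many accounts.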

That last one had better at least require two or three people to sign off on it. One shitty mod could easily become a bigger problem than a troll with that power in hand.

It doesn’t, that’s up to the server / community to empower their own mods. Both ban and remove are reversible actions also.

Why are you putting up with a “shitty” mod? Are you trying to force your speech on a community that has asked you not to?

This is the kind of response I’d expect from a shitty mod :)

Blocked

how could i find out why my account keeps getting marked as a bot?

No one can mark an account as a bot except you, so maybe it’s an app that keeps setting it.

i only use firefox; are the instance admins able to set it on your account? (because that would make sense)

when i first joined lemmy, i didn’t understand how it worked, so i would sign up with one instance and, when i could no longer up/downvote, switch to another instance. it eventually led me to joining .ml, and it was here i learned about the bot account setting and saw that it was set on the old accounts that i don’t use anymore. i’ve always wondered why.

I’m not sure, but you could probably go into those other accounts and change them to non-bot accounts if you still have access to them.

Yes, that works; but I was only curious as to how it got set in the first place since I didn’t do it.

the toxicity of the reddit liberal instances has been making me delete the other accounts and also makes me hope that I can just stick w .ml.

Hi there! Admin of Tucson.social here.

I think that the only way the fediverse can honestly handle this is through local/regional nodes, not interest-based global nodes.

Ideally this would manifest as some sort of non-profit entity that would work with municipalities to create community owned spaces that have paid moderation.

So then comes the problem of folks not agreeing with a local node’s moderation staff - but that’s also WHY it should be local. It’s much easier to petition and organize against someone who exists in your town than some guy across the globe who happens to own a large fediverse node.

This model just doesn’t work (IMO) if nodes can’t be accountable to a local community. If you don’t like how Mastodon, or lemmy.world are moderated you have zero recourse. For Tucson.social - citizens of Tucson can appeal to me directly, and because they are my fellow citizens I take them FAR more seriously.

Only then will people be trusting enough to allow for the key element to protecting against AI Slop. Human Indemnification Systems. Right now, if you wanted to ask the community of lemmy.world to provide proof they are human, you’d wind up with an exodus. There’s just no trust for something like that and it would be hard to acquire enough trust.

With a local node, that conversation is still difficult, but we can do things that just don’t scale with global nodes. Things like validating a person by meeting them to mark them as “indemnified” on a platform, or utilizing local political parties to validate if a given person is “real” or not using voter rolls.

But yeah, this is a bit rambly, but I’ll conclude that this is a problem that exists at the intersection between trust and scale and that I believe that local nodes are the only real solution that can handle both.

lemmy.world are moderated you have zero recourse

???

I don’t particularly have any issues with them.

But if a user did, they don’t have much recourse. I’m talking about that as a structural aspect. Not a moral one.

But sure if you just want to claim this puts me in the [email protected] community by ripping it out from any relevant context, go ahead I guess?

I didn’t say you were power tripping.

I was mentioning that community as a way to handle power tripping mods.

It also works, [email protected] is being replaced by [email protected] after the admin started power tripping.

So it’s not just moral, it also has a real impact by allowing users to organize and switch communities

Oh okay! I’m sorry about the misunderstanding.

No worries!

Oh wow you are fast - I just commented with the identical example. :-)

Nice comment!

Fwiw, Blaze I’m sure was saying that the recourse could be to post the infraction there, so that people become aware of a “power tripping bastard”, i.e. the lemmy.world mod hypothetical example mentioned earlier.

Multiple times communities have been shifted from one instance to another due to precisely this effect. A recent example is how [email protected] now has an alternative [email protected] to help people get out from under the heel of the power tripping admin of that particular instance (described in a recent post in the [email protected] community).

“Power tripping mods” definitionally cannot exist on the fediverse where anyone can create an instance or community. Even on Reddit, 99% of the time someone said a mod was “power tripping” it was just a right winger upset that the mod removed their disruptive nonsense.

The purpose of communities like the one you linked to is to shame mods into employing a passive, generic bare-minimum style of moderation, when we should be encouraging the opposite if we want diversity in the fediverse.

Three examples from that community, where other people can discuss the moderation, and see whether it’s power tripping or not.

right winger upset

Right wingers aren’t that numerous on Lemmy, but when this happens it gets quickly disqualified by the people commenting.

anyone can create an instance or community

Enjoy your empty community nobody cares about because people post on the one where most of the people are, where the power tripping mod is operating

Mods and admins on the Fediverse are not democratically elected, they have complete control. Accusing one of “power tripping”, in their own community, on the instance they presumably pay for, is not a rational accusation, since they definitionally cannot exist in a state of less power. What that community is trying to do is use the threat of public shaming to influence behavior. It’s how you get weak moderation and generic communities where bad actors can thrive. A community dedicated to “Stopping bad mods” sounds good on the surface, but it’s an argument made in bad faith.

The first sentence you wrote is either misleading or incorrect, and I think it’s important to reexamine. Each administrator has control over the instance they run, but they don’t have control over the Fediverse itself, and because it’s so easy for people to move to other instances, they have little control over other users.

Accusing one of “power tripping”, in their own community, on the instance they presumably pay for, is not a rational accusation, since they definitionally cannot exist in a state of less power

Mods don’t pay for the instance, they aren’t in charge of any of it.

Some admins have strong policies against getting involved in the moderation of communities, leaving potential power tripping mods unchecked.

What that community is trying to do is use the threat of public shaming to influence behavior. It’s how you get weak moderation and generic communities.

- A community is the most popular on a topic, it’s by far the most active community on that topic across the whole platform

- The single mod, who was just the first one to create the community when everyone came to Lemmy, starts to power trip

- The admin does not want to intervene

- What solution do the users have besides organizing on a community like [email protected] ?

Power tripping mods can exist anywhere there are mods, even here. The rest of your point stands though.

It’s theirs. They can do whatever they want. Any limits on their power within the instance/community are purely voluntary on the part of the owner.

Instance = admin, community = mod, but either can still power trip within the confines of their little worlds.

Thanks for the thoughtful response. I too think that regional instances would be ideal for a “backbone” of the social web. But at the same time, I feel that interest-based connection is a truly unique strength of the internet and it would be a sad thing to lose to the slop.

Ultimately, I think that more, smaller instances is likely the best “ultimate” defense against slop since there is no incentive for them to scale beyond their needs. But every instance admin is technically responsible for the content on all federated instances. Which can get overwhelming!

I mean, regional instances don’t have to stop folks from engaging primarily with interest based communities.

Some regions will dominate certain interests, for example - here in Tucson we’re considered one of the Amateur Astronomy capitals of the world. If mander.xyz were to disappear tomorrow, Tucson would make a good home for all of the fediverse’s astronomy needs even though it’s a region-based instance.

Further, there’s nothing that states an interest-based instance needs any registration. One could imagine a world where local instances have all the users and identities, and the interest based instances simply provide communities to the larger fediverse with no users of their own.

But yeah, it’s definitely a paradigm shift that makes interest based communities a bit more difficult to find.

Further, there’s nothing that states an interest-based instance needs any registration. One could imagine a world where local instances have all the users and identities, and the interest based instances simply provide communities to the larger fediverse with no users of their own.

Yes, I’ve had this same thought and I think it’s a great model! Whether it comes to pass remains to be seen. But the concept is good!

Maybe it was silently assumed, but nobody so far has mentioned the endless stream of scrapers that go through my probably juicy but private instance. I’m banning a new bot every week, and by now they have switched to distributed actions. I get over 400 requests per hour from a couple of IPs for the same stuff, with changing user agents because I wrote automated detection mechanisms. I might just make my instance login-only.
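For anyone curious what that kind of detection could look like, here is a small Python sketch. It is only a guess at the general idea, not the commenter’s actual mechanism: the function name `flag_scrapers`, the `(ip, user_agent)` input shape, and both thresholds are hypothetical, and the input is assumed to be one hour of access-log entries.

```python
from collections import defaultdict


def flag_scrapers(requests, max_requests=400, max_user_agents=3):
    """Return the sorted list of IPs that look like scrapers.

    `requests` is an iterable of (ip, user_agent) pairs covering one hour
    of traffic (hypothetical shape). An IP is flagged if it made more than
    `max_requests` requests or rotated through more than `max_user_agents`
    distinct user agents.
    """
    counts = defaultdict(int)
    agents = defaultdict(set)
    for ip, user_agent in requests:
        counts[ip] += 1
        agents[ip].add(user_agent)
    return sorted(
        ip
        for ip in counts
        if counts[ip] > max_requests or len(agents[ip]) > max_user_agents
    )


if __name__ == "__main__":
    # One IP hammering the server while rotating ten user agents,
    # and one ordinary visitor with a single browser.
    hour = [("203.0.113.5", f"agent-{i % 10}") for i in range(450)]
    hour += [("198.51.100.7", "Firefox")] * 20
    print(flag_scrapers(hour))
```

Once scrapers spread the same load across many IPs, per-IP counting like this stops working, which is presumably why login-only access starts to look attractive.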

Instead of trying to detect and block it, just disincentivize it.

Most AI spam on social media tries to exploit various systems intended to predict “good” content on the basis of a user’s past activity, by tracking reputation/karma/etc. Bots build up karma by posting a massive amount of innocuous (but usually insipid) content, then leverage that karma to increase the visibility of malicious content. Both halves of this process result in worse content than if the karma system didn’t exist in the first place.
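To make that concrete, here is a toy Python sketch comparing a karma-weighted ranking with a ranking that ignores the author’s history. The scoring formula and every name in it (`karma_weighted_score`, `rank`, the sample posts) are invented for illustration and do not describe any real platform’s algorithm.

```python
import math


def karma_weighted_score(votes, author_karma):
    # Hypothetical formula: votes are boosted by the log of the author's karma.
    return votes * (1 + math.log10(1 + author_karma))


def content_only_score(votes):
    # Alternative: only the votes on this post count, author history is ignored.
    return votes


def rank(posts, use_karma):
    """Return post ids ordered from highest to lowest score."""
    def key(post):
        if use_karma:
            return karma_weighted_score(post["votes"], post["author_karma"])
        return content_only_score(post["votes"])
    return [post["id"] for post in sorted(posts, key=key, reverse=True)]


posts = [
    {"id": "human_post", "votes": 5, "author_karma": 9},
    {"id": "bot_ad", "votes": 2, "author_karma": 99_999},  # karma farmed with filler posts
]

if __name__ == "__main__":
    print(rank(posts, use_karma=True))   # the farmed account's ad ranks first
    print(rank(posts, use_karma=False))  # the better-received post ranks first
```

In this toy setup, dropping the karma term removes the payoff from farming, so the filler posts stop being worth making in the first place.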

“The fediverse” really can’t. That’s just the reality of a decentralized system. It’s going to be up to individual instances to sort it out.

But that’s a good thing, because what it means is that different instances can and will try different approaches, and between them, they’ll sooner or later hit on the one(s) that will be most effective.

Any speculation as to what those tools might look like?

Ban it outright in the rules of individual instances, bully AI piglets for printing the lowest-value content online in the same way NFT goobers are ostracised, run AI image and writing detectors on suspect posts. The common denominator of any AI post is that it’s going to be shit and it should just be treated like someone repeatedly posting a Lorem ipsum copypasta or spam email.

I don’t have the foggiest idea.

And really, if I did have a good idea, I wouldn’t post it publicly anyway. That’d just be tipping my hand to the astroturfers.

I think that being human scale is largely the appeal of the Fediverse. Each instance isn’t meant to grow to the size of a centralized platform, but to be a relatively small community of people with some shared interests. I look at it similarly to the way IRC channels worked back in the day. You tend to have a group of people whom you interact with frequently and that’s how you know they’re human. If some bot enters the community then it becomes obvious very quickly.

The fediverse architecture was built from the beginning to allow instance-by-instance exercise of discretion to mute any systemic effects that could take over the network as a whole.

This was I think oriented toward limiting swarming behavior from trolls, but I think it also applies to AI bots.

Right now it seems that the Fediverse’s main protection is that it just isn’t a juicy enough target for wide-scale spam and bad-faith agenda pushers.

If you ask me, they are already here right now, but I think it’s not the architecture of the fediverse but the judgment of individual mods that has let us down in this case.

I don’t think there is any way to have a genuine “open forum” amongst complete strangers. There have always been human troll farms pushing narratives using sock puppet accounts, AI is just enabling it to reach new scales.

I actually am for echo chambers when it comes to social media, but only the kind in which you follow people you know or trust and ignore complete strangers, while making sure you get news and critical information from OUTSIDE social media, again from institutions you trust.

Yes, strong moderation by members of the community is sufficient to recognize and remove bad (human) actors. The question is one of volume and overwhelming those human mods. GPT can create hundreds of bad-faith accounts.

I have had similar thoughts. I think the answer ultimately lies in active mods that can really get to know a community and its users and identify when users are pushing a narrative, even if they can’t confirm whether they are a bot or not.

Also, as @[email protected] pointed out, user registrations. On startrek.website we have a question that is easy for a Star Trek fan to answer but not easy for a bot (although, getting back to your concern, ChatGPT probably would have no problem).

I fully agree. What worries me is if bad actors create bots that are able to overwhelm the human moderators.

Same way email handles spam …

What can be done? Smarter people can probably list plenty of things. But in the end, it’s a constant race of trying to outcompete each other. And with LLMs/AI, you can literally train a model on the system you want it to overcome, with that express purpose, and let it work out the “how”, and you’re back to square one again.

I think it can best be put in song

Or put another way: how do you make a bear proof trashcan that can defeat a bear but not the dumbest of humans?

As you said, a platform with 44k monthly active users is probably not worth the time investment for spammers and agenda pushers.

If we get there at some point, we’ll see. It seems like we are still quite far off.

You say that, but they’re already here. I see completely automated commercial spam posts every few days. And we all know there’s already political agenda-pushers. Hell, Lemmy was created by some.

see completely automated commercial spam posts every few days.

Don’t those accounts get banned quite fast?

And we all know there’s already political agenda-pushers. Hell, Lemmy was created by some.

It’s community-dependent. Lemmy.ml communities are far from being the most popular on Lemmy: https://lemmyverse.net/communities?order=active_month